Apache Hadoop is an open-source framework for distributed storage and processing. HDFS (Hadoop Distributed File System) handles storage and MapReduce handles processing. Here are some Hadoop admin commands for beginners.

Hadoop Admin Commands:

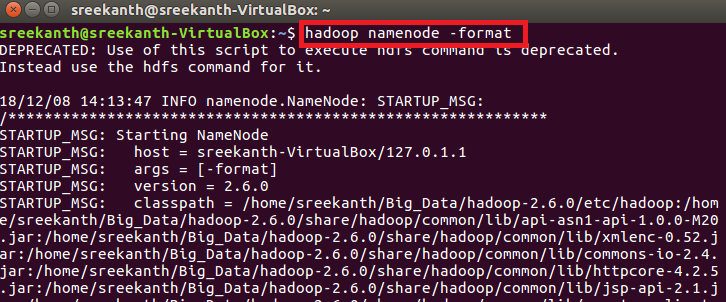

hadoop namenode -format:

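This command formats the HDFS file system from the NameNode in a cluster. Formatting erases the existing file system metadata, so it is normally run only once, when a new cluster is set up.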

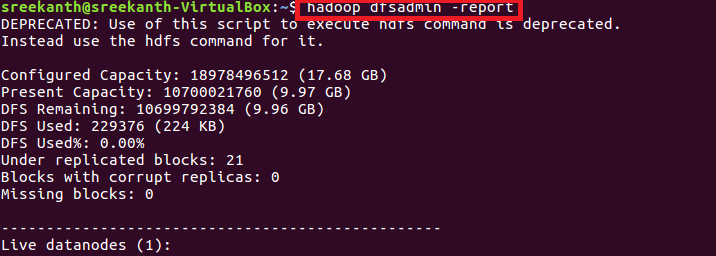

hadoop dfsadmin -report:

This command prints a report on the overall HDFS file system. It is useful for checking how much disk space is available, NameNode information, how many DataNodes are running, and whether there are corrupted blocks in the cluster.

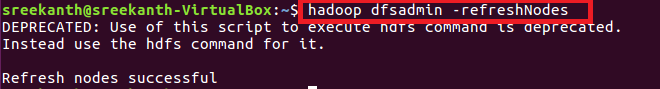
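
The report is long, so it can help to filter it down to a few summary lines. The sketch below is only an illustration: the helper name `summarize_report` is hypothetical, and it assumes the report contains the labels `DFS Remaining:`, `Live datanodes` and `Blocks with corrupt replicas:`, which may differ between Hadoop versions.

```shell
# Hypothetical helper: read a dfsadmin report on stdin and print a short summary.
# The labels matched here are an assumption about the report format.
summarize_report() {
  awk '
    # Only the first "DFS Remaining" line is the cluster-wide total;
    # later ones belong to individual DataNodes.
    /^DFS Remaining:/ && !seen_remaining++ { print }
    /^Live datanodes/ { print }
    /^[ \t]*Blocks with corrupt replicas:/ { print }
  '
}

# Query the cluster only when the hadoop command is installed.
if command -v hadoop >/dev/null 2>&1; then
  hadoop dfsadmin -report | summarize_report
fi
```

Because the helper only reads standard input, it can also be tried on a report saved to a file.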

hadoop dfsadmin -refreshNodes:

This command is used to commission or decommission nodes. It makes the NameNode re-read its include and exclude host files.
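
As a rough sketch of decommissioning, the host is first added to the exclude file and then the NameNode is told to refresh. The helper name `add_to_exclude`, the file path and the host name are all hypothetical; the file is assumed to be the one referenced by the `dfs.hosts.exclude` property in `hdfs-site.xml`.

```shell
# Hypothetical helper: add a host to an exclude file, skipping duplicates.
# $1 = exclude file (assumed to be the one named by dfs.hosts.exclude)
# $2 = host name of the DataNode to decommission
add_to_exclude() {
  touch "$1"
  # -x matches the whole line, -F treats the host name as a literal string.
  grep -qxF "$2" "$1" || echo "$2" >> "$1"
}

# Example usage (path and host name are placeholders):
#   add_to_exclude /etc/hadoop/conf/dfs.exclude datanode3.example.com
#   hadoop dfsadmin -refreshNodes
```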

Safe mode commands in Hadoop:

Safe mode is a state of the NameNode in which it does not allow changes to the file system, so the Hadoop cluster is read-only. Three commands are mainly used with safe mode.

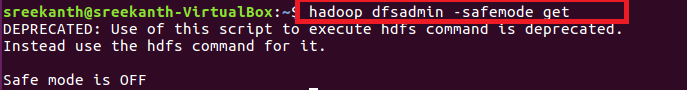

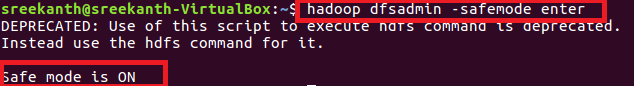

hadoop dfsadmin -safemode get:

Reports the current status of safe mode (maintenance mode), that is, whether it is ON or OFF.

hadoop dfsadmin -safemode enter:

This command turns safe mode ON.

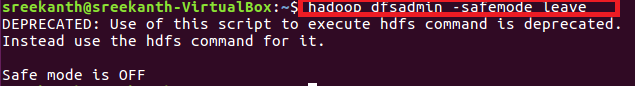

hadoop dfsadmin -safemode leave:

This command turns safe mode OFF.

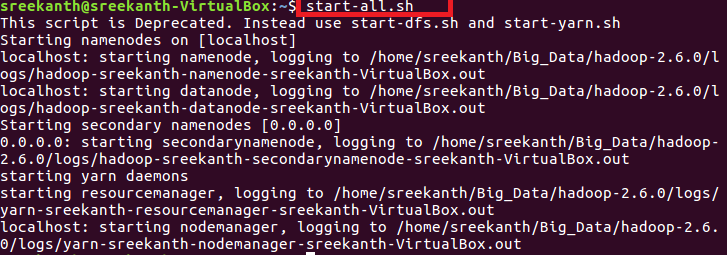
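
In a script it is often handy to reduce the status message to a single word. The sketch below assumes the message printed by `-safemode get` contains the text "Safe mode is ON" or "Safe mode is OFF"; the helper name `safemode_state` is hypothetical.

```shell
# Hypothetical helper: reduce the text printed by "-safemode get" to ON or OFF.
# Assumes the message contains "Safe mode is ON" or "Safe mode is OFF".
safemode_state() {
  case "$1" in
    *"Safe mode is ON"*)  echo ON ;;
    *"Safe mode is OFF"*) echo OFF ;;
    *)                    echo UNKNOWN ;;
  esac
}

# Query the cluster only when the hadoop command is installed.
if command -v hadoop >/dev/null 2>&1; then
  state=$(safemode_state "$(hadoop dfsadmin -safemode get 2>/dev/null)")
  echo "Safe mode: $state"
fi
```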

start-all.sh:

Starts all the daemons: NameNode, Secondary NameNode, DataNodes, and the YARN daemons (ResourceManager and NodeManagers).

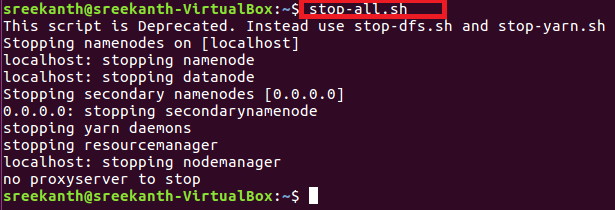

stop-all.sh:

Stops all the daemons: NameNode, Secondary NameNode, DataNodes, and the YARN daemons (ResourceManager and NodeManagers).
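
One way to confirm that the daemons are running after a start is to look at the Java process list printed by `jps`, which ships with the JDK. The sketch below assumes a single-node setup where all five daemons run on the same machine; the helper name `missing_daemons` is hypothetical.

```shell
# Hypothetical helper: given jps output on stdin, print every expected daemon
# that is not listed. Assumes all five daemons run on this one machine.
missing_daemons() {
  jps_output=$(cat)
  for daemon in NameNode SecondaryNameNode DataNode ResourceManager NodeManager; do
    # -w matches whole words, so NameNode does not match SecondaryNameNode.
    printf '%s\n' "$jps_output" | grep -qw "$daemon" || echo "$daemon"
  done
}

# Check the local machine only when jps is installed.
if command -v jps >/dev/null 2>&1; then
  jps | missing_daemons
fi
```

An empty result means every expected daemon was found. On a multi-node cluster each machine runs only some of these daemons, so the list would need adjusting per host.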

hadoop fs -copyFromLocal <file 1> <file 2>:

Use this command to copy a file from the local file system to HDFS. Here <file 1> is the local source and <file 2> is the HDFS destination.

hadoop fs -copyToLocal <file 1> <file 2>:

Copies a file from HDFS to the local file system. Here <file 1> is the HDFS source and <file 2> is the local destination.

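hadoop fs -put <file 1> <file 2>:

This command works like copyFromLocal. The small difference is that copyFromLocal restricts the source to a local file reference, while put also accepts multiple sources and can read from standard input when the source is given as `-`.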

hadoop fs -get <file 1> <file 2>:

This command works like copyToLocal. The small difference is that copyToLocal restricts the destination to a local file reference.
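
To see the upload and download commands working together, a file can be sent to HDFS, fetched back, and compared with the original. This is a minimal sketch: the helper name `roundtrip_check` and the paths in the usage line are hypothetical.

```shell
# Hypothetical helper: upload a local file, download it again, compare the two.
# $1 = local file, $2 = HDFS destination path
roundtrip_check() {
  local_file="$1"
  hdfs_path="$2"
  copy="$local_file.copy"
  # -put fails if the destination already exists, so use a fresh HDFS path.
  hadoop fs -put "$local_file" "$hdfs_path" &&
  hadoop fs -get "$hdfs_path" "$copy" &&
  # cmp -s is silent and returns 0 only when both files are identical.
  cmp -s "$local_file" "$copy"
}

# Example usage (paths are placeholders):
#   roundtrip_check ./data.txt /user/hadoop/data.txt && echo "copies match"
```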

hadoop fs -setrep -w 5 file:

Use this command to set the replication factor of a file manually, here to 5. The -w flag makes the command wait until the replication has completed.
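
Since waiting for re-replication can take a while, a script might first check the current factor and only change it when needed. The sketch below reads the current value with `hadoop fs -stat %r`; the helper name `ensure_replication` and the example path are hypothetical.

```shell
# Hypothetical helper: change the replication factor only when it differs.
# $1 = HDFS path, $2 = wanted replication factor
ensure_replication() {
  path="$1"
  target="$2"
  # %r makes -stat print the file's current replication factor.
  current=$(hadoop fs -stat %r "$path") || return 1
  if [ "$current" != "$target" ]; then
    hadoop fs -setrep -w "$target" "$path"
  fi
}

# Example usage (path is a placeholder):
#   ensure_replication /user/hadoop/data.txt 5
```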

hadoop distcp hdfs://<ip1>/input hdfs://<ip2>/output:

Use this command to copy files from one cluster to another.
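
For repeated copies between the same two clusters, the command can be wrapped in a small function. This sketch adds the `-update` option, which skips files that already exist unchanged at the target; the helper name `copy_between_clusters` and the NameNode addresses in the usage line are hypothetical.

```shell
# Hypothetical wrapper: copy a directory from one cluster to another.
# $1 = source NameNode (host:port), $2 = target NameNode (host:port)
# $3 = source path, $4 = target path
copy_between_clusters() {
  # -update copies only files that are missing or different at the target.
  hadoop distcp -update "hdfs://$1$3" "hdfs://$2$4"
}

# Example usage (addresses are placeholders):
#   copy_between_clusters nn1.example.com:8020 nn2.example.com:8020 /input /output
```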

hadoop job -status <job-id>:

Use this command to check the status of a Hadoop job.

hadoop job -submit <job-file>:

Use this command to submit a Hadoop job file.

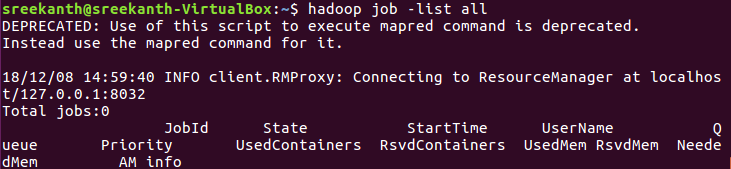

hadoop job -list all:

Use this command to list all Hadoop jobs. Without `all`, only the jobs that have not yet completed are listed.

hadoop job -kill-task <task-id>:

Sometimes a task has to be killed urgently while a job is still processing. This command is useful in that situation.
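
The job commands above can be combined, for example to print the status of every listed job. The sketch below assumes each job row in the `-list` output starts with an ID of the form `job_<digits>_<digits>`; the helper name `list_job_ids` is hypothetical.

```shell
# Hypothetical helper: print the job IDs found in "-list" output on stdin.
# Assumes each job row starts with an ID of the form job_<digits>_<digits>.
list_job_ids() {
  awk '$1 ~ /^job_[0-9]+_[0-9]+$/ { print $1 }'
}

# Query the cluster only when the hadoop command is installed.
if command -v hadoop >/dev/null 2>&1; then
  hadoop job -list all | list_job_ids | while read -r job_id; do
    hadoop job -status "$job_id"
  done
fi
```

Header lines such as the job count and the column titles do not match the pattern, so they are skipped.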
