Apache Spark Installation

Apache Spark is a framework and in-memory data processing engine. It is advertised as up to 100 times faster than Hadoop MapReduce for in-memory workloads. Spark itself is written mainly in Scala and provides APIs for Java, Scala, Python, and R. Today it is widely used for streaming and machine learning workloads.

Prerequisites for Spark installation:

1. Update the package lists on Ubuntu using:

sudo apt-get update

After you enter your password, apt refreshes the package lists.

2. Now install the JDK:

sudo apt-get install default-jdk

The Java version must be newer than 1.6. Spark 2.2 and later require Java 8 or newer.

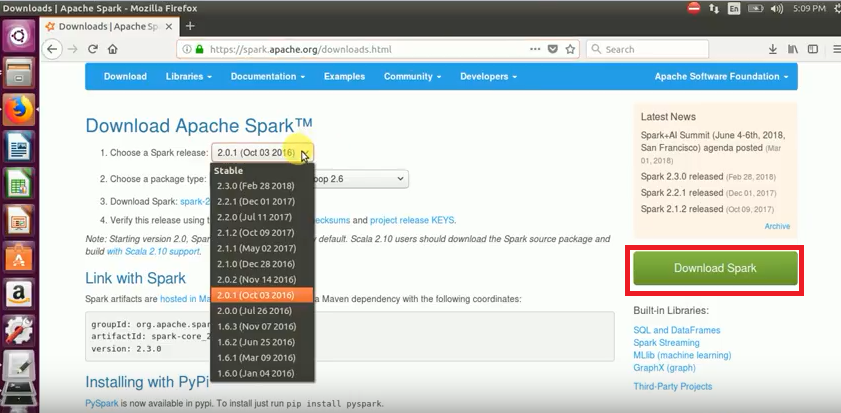
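To confirm the Java requirement from a script rather than by eye, something like the following could be used. This is a minimal sketch: the helper name `java_major` is hypothetical, and it assumes `java -version` prints the version as the first double-quoted token, which is the usual format for OpenJDK builds.

```shell
#!/usr/bin/env bash
# Hypothetical helper: convert a Java version string to its major number.
# Legacy scheme "1.8.0_292" gives 8; modern scheme "11.0.2" gives 11.
java_major() {
  local v="$1"
  case "$v" in
    1.*) v="${v#1.}" ;;   # drop the legacy "1." prefix
  esac
  echo "${v%%[._-]*}"      # keep everything before the first ".", "_" or "-"
}

# Only query the JVM when a java binary is actually on the PATH.
if command -v java >/dev/null 2>&1; then
  # `java -version` writes to stderr; take the first quoted token as the version.
  raw="$(java -version 2>&1 | awk -F'"' '/version/ {print $2; exit}')"
  echo "Detected Java major version: $(java_major "$raw")"
else
  echo "java not found; install the JDK first"
fi
```

The parsing lives in its own function so it can be checked against sample version strings without a JVM installed.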

Step 1: Download the Spark tarball from the official Apache Spark website.

Step 2: Move the tarball into your Hadoop directory.

Step 3: Extract the downloaded tarball using the command below:

tar -xzvf spark-2.x.x-bin-hadoop2.x.tgz
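If you would rather extract into a specific directory and fail cleanly when the file is missing, a small wrapper along these lines may help. It is only a sketch: `extract_tarball` is a hypothetical name, and the file and directory in the example call are placeholders for whatever you downloaded.

```shell
# Hypothetical helper: extract a gzip-compressed tarball into a destination directory.
extract_tarball() {
  local tarball="$1" dest="$2"
  if [ ! -f "$tarball" ]; then
    echo "tarball not found: $tarball" >&2
    return 1
  fi
  # -C switches to the destination directory before unpacking.
  mkdir -p "$dest" && tar -xzf "$tarball" -C "$dest"
}

# Example call (placeholder names; substitute the file you downloaded):
# extract_tarball spark-2.x.x-bin-hadoop2.x.tgz "$HOME/INSTALL"
```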

Step 4: After extracting the tarball, you get the Spark directory. Update the SPARK_HOME and PATH variables by adding the lines below to your ~/.bashrc file:

export SPARK_HOME=/home/slthupili/INSTALL/spark-2.x.x-bin-hadoop2.x

export PATH=$PATH:$SPARK_HOME/bin
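Editing the file by hand works fine, but if you script the setup it is easy to append the same export twice. One possible approach, sketched below, is a helper that appends a line only when it is not already present. The name `append_once` is hypothetical, and the example calls are commented out so nothing is changed by accident.

```shell
# Hypothetical helper: append a line to a file only if it is not already there.
append_once() {
  local line="$1" file="$2"
  touch "$file"
  # -F: fixed string, -x: match the whole line, -q: quiet
  grep -qxF -- "$line" "$file" || printf '%s\n' "$line" >> "$file"
}

# Example calls (single quotes keep $PATH and $SPARK_HOME unexpanded in the file):
# append_once 'export SPARK_HOME=/home/slthupili/INSTALL/spark-2.x.x-bin-hadoop2.x' "$HOME/.bashrc"
# append_once 'export PATH=$PATH:$SPARK_HOME/bin' "$HOME/.bashrc"
```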

Step 5: To check the bashrc changes, open a new terminal and type 'echo $SPARK_HOME'.
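Printing the variable shows that it is set, but not that it points at a usable Spark directory. A slightly stricter check might look like this sketch, where `check_spark_home` is a hypothetical helper that only tests for an executable `bin/spark-shell` under the given path.

```shell
# Hypothetical helper: succeed only if the directory contains an executable bin/spark-shell.
check_spark_home() {
  local home="$1"
  [ -n "$home" ] && [ -x "$home/bin/spark-shell" ]
}

# ${SPARK_HOME:-} expands to an empty string when the variable is unset.
if check_spark_home "${SPARK_HOME:-}"; then
  echo "SPARK_HOME looks valid: $SPARK_HOME"
else
  echo "SPARK_HOME is unset or does not contain bin/spark-shell"
fi
```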

Step 6: After Spark is installed successfully, verify it by starting the Spark shell in a terminal using the command below:

spark-shell

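Step 7: To check the versions, run the commands below at the scala> prompt. The first two both print the Spark version; the third prints the Scala version:

scala> spark.version

scala> sc.version

scala> util.Properties.versionString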
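The same information can usually be read without opening the interactive shell, because `spark-submit --version` prints a banner and exits. The sketch below extracts the Scala version from that banner. The helper name `scala_version_from_banner` is hypothetical, and the parsing assumes the banner contains a line of the form "Using Scala version X.Y.Z, ...", which may differ between releases.

```shell
# Hypothetical helper: pull the Scala version out of banner text read from stdin.
# Assumes a line of the form "Using Scala version X.Y.Z, ...".
scala_version_from_banner() {
  sed -n 's/.*Using Scala version \([0-9][0-9.]*\).*/\1/p' | head -n 1
}

if command -v spark-submit >/dev/null 2>&1; then
  # The banner is written to stderr, so merge it into stdout before parsing.
  spark-submit --version 2>&1 | scala_version_from_banner
else
  echo "spark-submit not found on PATH"
fi
```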