In this blog, we will explain how to resolve the “Failed to construct kafka consumer” error in a Big Data environment.

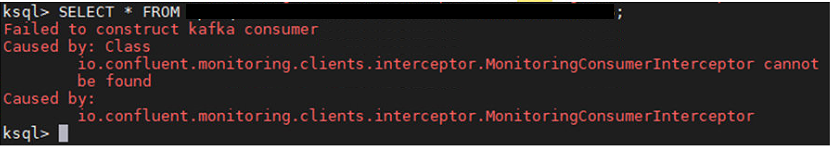

I am trying to set up a Confluent Kafka cluster along with ksqlDB for large data sets. We use those data sets for streaming with Apache Kafka. When I enter a SELECT query in the ksqlDB CLI, the console shows the error below.

Kafka Error:

Failed to construct kafka consumer

Caused by: Class io.confluent.monitoring.clients.interceptor.MonitoringConsumerInterceptor cannot be found

Caused by: io.confluent.monitoring.clients.interceptor.MonitoringConsumerInterceptor

The above error is caused by a problem with the Monitoring Consumer Interceptor jar file: the interceptor class named in the configuration cannot be loaded. Here we provide two resolutions for this issue.
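Before changing anything, it can help to confirm whether any jar in the interceptor directory actually provides the class named in the error. The following is a minimal Python sketch, not an official Confluent tool. It assumes the jars live in /usr/share/java/monitoring-interceptors (the directory used in the solutions in this post), and the function name `jars_containing_class` is hypothetical.

```python
import zipfile
from pathlib import Path

# Class from the error message, written as the entry path it would have inside a jar.
CLASS_ENTRY = (
    "io/confluent/monitoring/clients/interceptor/"
    "MonitoringConsumerInterceptor.class"
)


def jars_containing_class(jar_dir, class_entry=CLASS_ENTRY):
    """Return the jar files directly under jar_dir that contain class_entry."""
    matches = []
    for jar in sorted(Path(jar_dir).glob("*.jar")):
        try:
            with zipfile.ZipFile(jar) as archive:
                if class_entry in archive.namelist():
                    matches.append(jar)
        except zipfile.BadZipFile:
            # Not a valid archive (for example a truncated download): skip it.
            continue
    return matches


if __name__ == "__main__":
    # Assumed directory; adjust it to match your installation.
    found = jars_containing_class("/usr/share/java/monitoring-interceptors")
    if found:
        for jar in found:
            print("class found in", jar)
    else:
        print("no jar in this directory provides the interceptor class")
```

If no jar provides the class, the interceptor configuration points at something the server cannot load, which matches the error above.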

Solution 1:

Step 1: First, find the “Monitoring Consumer Interceptor” jar file in the directory below:

/usr/share/java/monitoring-interceptors

Step 2: Remove the jar file from the above directory, then log in to ksqlDB and run your database queries again.

Step 3: After that, comment out the Monitoring Producer and Consumer interceptor entries in the properties file below:

ksql-server.properties
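The commenting-out step in Solution 1 can be sketched in Python as follows. This is only an illustration under stated assumptions: it assumes the entries use the keys `producer.interceptor.classes` and `consumer.interceptor.classes` and point at the Confluent monitoring interceptor classes, so check your own file before relying on it. The function name `comment_out_interceptors` is hypothetical.

```python
import re

# Assumed key names: uncommented "producer.interceptor.classes" or
# "consumer.interceptor.classes" entries that reference a monitoring interceptor.
INTERCEPTOR_LINE = re.compile(
    r"^\s*(producer|consumer)\.interceptor\.classes\s*[=:]"
    r".*Monitoring(Producer|Consumer)Interceptor"
)


def comment_out_interceptors(text):
    """Return the properties text with monitoring interceptor entries commented out."""
    result = []
    for line in text.splitlines(keepends=True):
        if INTERCEPTOR_LINE.match(line):
            # Prefix the entry with '#' so the properties parser ignores it.
            result.append("# " + line.lstrip())
        else:
            result.append(line)
    return "".join(result)


# Sample content for illustration only, not copied from a real installation.
sample = (
    "bootstrap.servers=localhost:9092\n"
    "producer.interceptor.classes=io.confluent.monitoring.clients."
    "interceptor.MonitoringProducerInterceptor\n"
    "consumer.interceptor.classes=io.confluent.monitoring.clients."
    "interceptor.MonitoringConsumerInterceptor\n"
)

if __name__ == "__main__":
    print(comment_out_interceptors(sample), end="")
```

To apply this to a real file, keep a backup copy, read the file, pass its contents through the function, and write the result back. Lines that are already commented are left unchanged, so running it twice is harmless.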

Solution 2:

Step 1: Go to the Monitoring Consumer Interceptor jar file path:

/usr/share/java/monitoring-interceptors

Step 2: Replace the jar file with a newer version that matches your Confluent Kafka version.

Step 3: Then verify that the Monitoring Producer and Consumer interceptor configurations in the ksqlDB server properties file match the Confluent Kafka version installed on your cluster.

ksql-server.properties
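To see which jar versions are currently in the interceptor directory before replacing them, a small Python sketch like the one below may help. It assumes the version number is embedded in the file name in the form `name-X.Y.Z.jar`, which is an assumption about the naming; jars that do not follow it are reported with no version. The function name `jar_versions` is hypothetical.

```python
import re
from pathlib import Path

# Assumed naming pattern: "<artifact>-<dotted version>.jar"
VERSION_IN_NAME = re.compile(r"-(\d+(?:\.\d+)+)\.jar$")


def jar_versions(jar_dir):
    """Map each jar file name in jar_dir to the version parsed from its name, or None."""
    versions = {}
    for jar in sorted(Path(jar_dir).glob("*.jar")):
        match = VERSION_IN_NAME.search(jar.name)
        versions[jar.name] = match.group(1) if match else None
    return versions


if __name__ == "__main__":
    # Directory taken from the steps above; adjust it if your layout differs.
    listing = jar_versions("/usr/share/java/monitoring-interceptors")
    for name, version in listing.items():
        print(name, "->", version or "version not found in file name")
```

Compare the reported version with the version of Confluent Kafka installed on the cluster to decide whether the jar needs replacing.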

If you cannot find the ksqlDB server properties file, use grep/find from the console to locate it.
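As an alternative to grep/find, the same search can be sketched in Python. This is a minimal example: the function name `find_properties_files` is hypothetical, and the root directory to search is something you need to choose for your own installation.

```python
import os


def find_properties_files(root, name="ksql-server.properties"):
    """Walk the tree under root and return every path whose file name equals name."""
    matches = []
    # os.walk silently skips directories it cannot read.
    for dirpath, _dirnames, filenames in os.walk(root):
        if name in filenames:
            matches.append(os.path.join(dirpath, name))
    return sorted(matches)
```

For example, `find_properties_files("/etc")` searches under /etc. That starting point is an assumption, because the location depends on how Confluent was installed.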

Both resolutions above are simple ways to fix this Confluent Kafka issue on your cluster. “Failed to construct kafka consumer” is a rare error, and this type of issue may be caused by jar file libraries. Once the jar file is replaced with an updated version, the error should be resolved; otherwise, go with “Solution 1”. You can download the jar file you need from the official Confluent Kafka website or from the Maven repository website.

If your cluster has multiple nodes, update or remove the jar file on each node; otherwise you will get the same error.