In this article, we will provide a resolution for Spark connection error via JDBC or ODBC using an external database MySQL.

While Connecting Spark and MySQL using JDBC driver getting this error: “ClassNotFoundException: com.mysql.jdbc.Driver”.

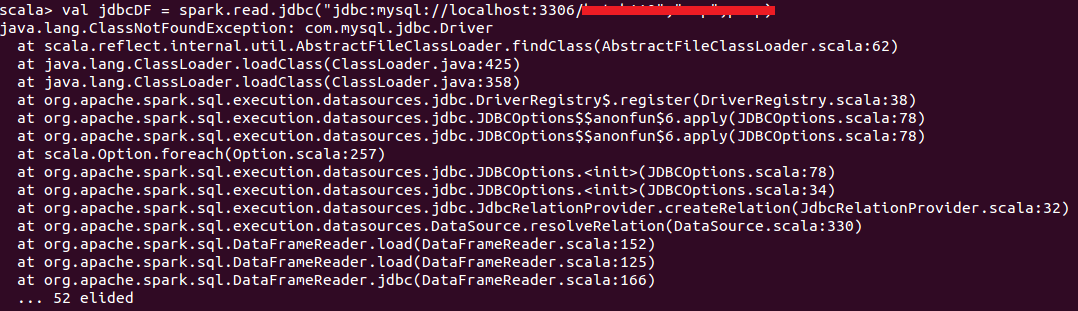

Here is a full error with a screenshot.

Spark Connection Error:

Scala> val jdbcDF = spark.read.jdbc("jdbc:mysql://localhost:3306/new_training", "emp", "prop")

java.lang.ClassNotFoundException: com.mysql.jdbc.Driver

at scala.reflectinternal.util.AbstractFileClassLoader.findClass(AbstractFileClassLoader.scala:62)

at java.langClassLoader.loadClass(ClassLoader.java:425)

at java.langClassLoader.loadClass(ClassLoader.java:425)

at org.apache.spark.sql.execution.datasources.jdbc.DriverRegistry$.register(DriverRegistry.scala:38)

at org.apache.spark.sql.execution.datasources.jdbc.JDBCOption$$annonfun$6.apply(JDBCOptions.scala:78)

at scala.Option.foreach(Option.scala:257)

at org.apache.spark.sql.execution.datasources.jdbc.JDBCOptions<init>(JDBCOptions.scala:78)

at org.apache.spark.sql.execution.datasources.jdbc.JDBCOptions<init>(JDBCOptions.scala:34)

at org.apache.spark.sql.execution.datasources.jdbc.JdbcRelationPRovider.createRelation(JdbcRelationProvider.scala:32)

at org.apache.spark.sql.execution.datasources.DataSource.resolveRelation(DataSource.scala:330)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:152)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:125)

at org.apache.spark.sql.DataFrameReader.load(DataFrameReader.scala:166)

... 52 elided

Solution:

Here we provided the simple solution for Spark, JDBC connection related issue.

Step 1: Copy “Spark_Custom.jars” folder to “$SPARK_HOME” folder

Step 2: Rename “$SPARK_HOME/conf/spark-defaults.conf.template” file to “$SPARK_HOME/conf.spark-defaults.conf”

Step 3: Add the below jars to “$SPARK_HOME/conf/spark-defaults.conf” file

spark.driver.memory 5g spark.jars /home/sreekanth/hadoop/SPARK/spark-2.0.1-bin-hadoop2.6/Spark_Custom.jars/guava-16.0.1.jar; cassandr-driver-core-3.0.2.jar, jackson-core-2.5.2.jar; jackson-module-scala-2.1.1-bin.jar

After adding Spark and JDBC connection jar files try to connect to RDBMS, we must require the below 4 important points:

1.connection url

2.username & password

3.driver class name

4.client jar

After that check in scala prompt

scala > prop.setProperty(“driver”, “com.mysql.jdbc.Driver”)

scala> val jdbcDF = spark.read.jdbc(“jdbc:mysql://localhost:3306/new_training”, “emp”, “prop”)

In Spark-SQL getting this type of error belongs to Spark and JDBC driver connection along with MySQL server it may be compatible versions of Spark Connection jar file. Here we provided Spark custom jar files into Spark_ Home path and goto Spark Configuration path and add all connection jar files in the directory.

Summary: In the Hadoop cluster, connection errors are common, Spark JDBC connection is also very common error but we need to give Spark related connection jar files in the configuration path and meanwhile check versions. Sometimes getting version compatible related issues. In this cluster we are using MySQL is an external Database so we need to set up with Spark also through JDBC or ODBC driver. Most of the time in Spark-SQL using only JDBC driver for external database connections. In Big Data environment Spark is one of the fastest data processing frameworks for large data sets.