In Apache Sp

Scala is one of the most widely used functional programming languages today. It resembles Java but differs in a few ways. Scala is a natural fit for Apache Spark, since Spark itself is written in Scala. Here are the steps for installing Scala.

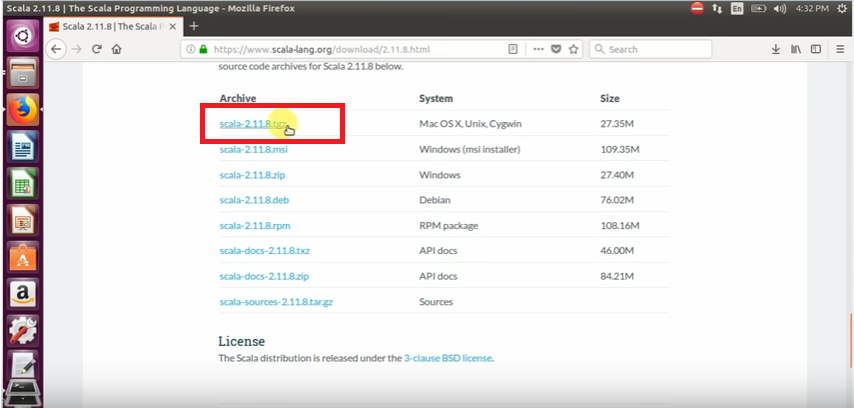

Step 1: Download the Scala tarball from the official Scala website to your machine.

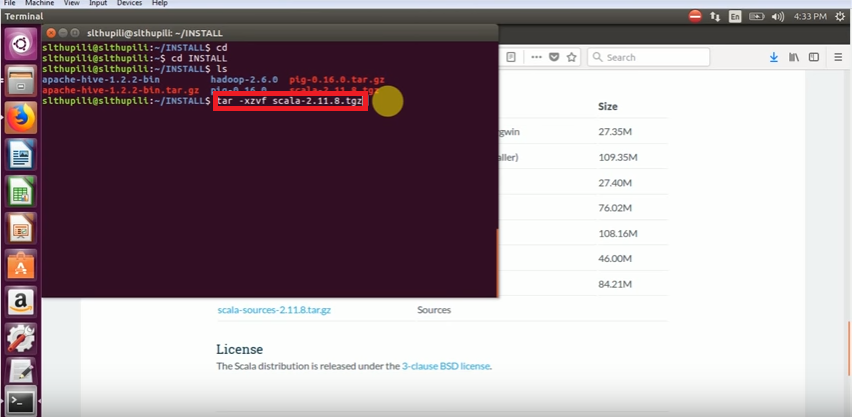

After downloading, move the tarball into your Hadoop-related directory, then follow the step below.

Step 2: Extract the tarball using the command below:

tar -xzvf scala-2.11.8.tgz

Step 3: Open the extracted Scala directory and check that the files are there, then go on to the next step.

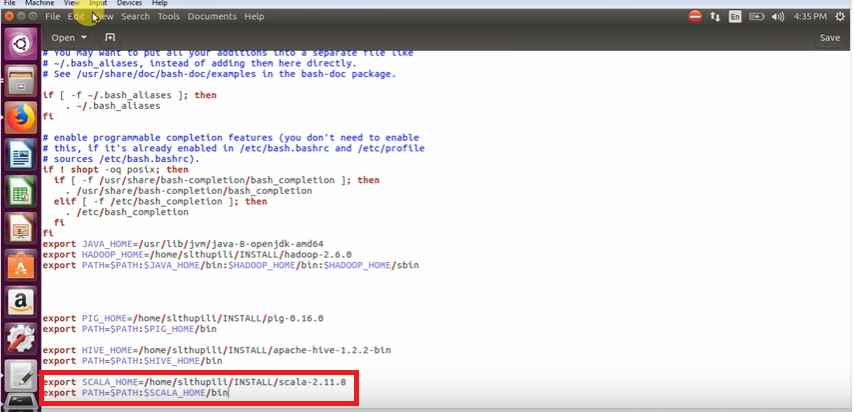

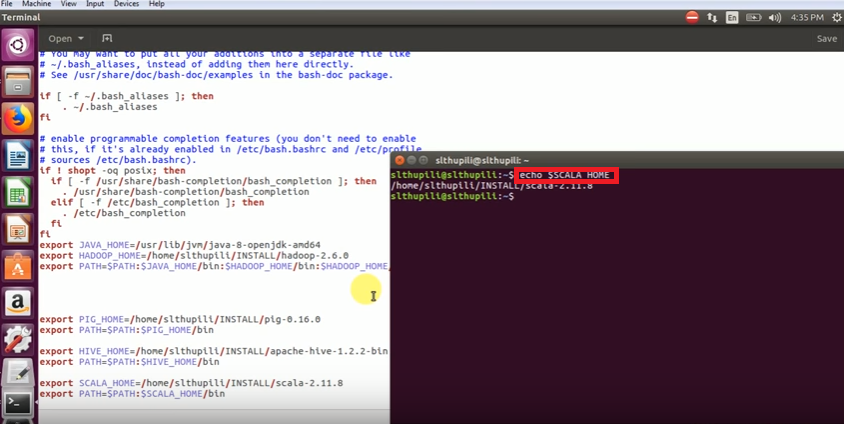
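The extraction and the file check can be sketched as a short script. This is only an illustration: it assumes the tarball sits in the current directory and unpacks into a directory named scala-2.11.8 with its launchers under bin/, and `check_scala_dir` is a hypothetical helper, not part of Scala.

```shell
# Assumed names: adjust to wherever you placed the tarball.
SCALA_TGZ="scala-2.11.8.tgz"
SCALA_DIR="scala-2.11.8"   # directory the tarball is assumed to create

# Hypothetical helper: succeeds only if the scala launcher
# exists under bin/ and is executable.
check_scala_dir() {
    [ -x "$1/bin/scala" ]
}

# Extract only if the tarball is actually present.
# -x extract, -z gunzip, -f archive file (add -v to list files while unpacking)
if [ -f "$SCALA_TGZ" ]; then
    tar -xzf "$SCALA_TGZ"
fi

if check_scala_dir "$SCALA_DIR"; then
    echo "Scala files found in $SCALA_DIR"
else
    echo "Scala files not found; re-check the extraction step"
fi
```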

Step 4: Update the SCALA_HOME and PATH variables in the .bashrc file.

After the update, every new terminal picks up SCALA_HOME and PATH automatically from the .bashrc file.

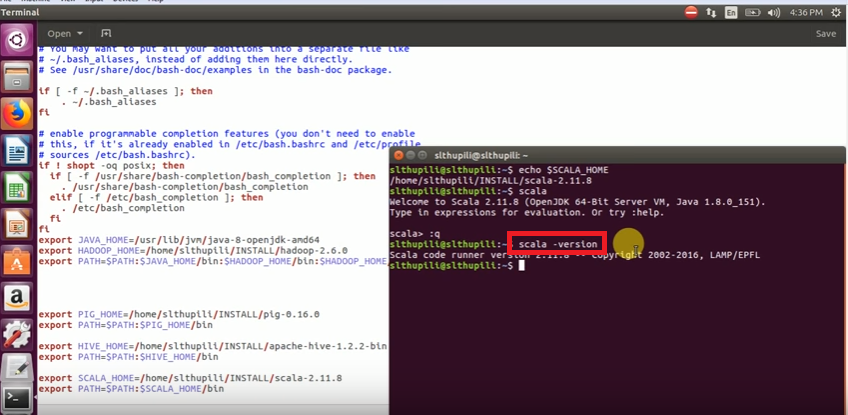
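As a rough sketch, the lines added to .bashrc could look like the following. The install path here is an assumption made for illustration; point SCALA_HOME at the directory you actually extracted in Step 2.

```shell
# Lines to append to ~/.bashrc.
# Assumed location: replace with your real Scala directory.
export SCALA_HOME="$HOME/scala-2.11.8"

# Put the Scala launchers (scala, scalac) on the command search path.
export PATH="$PATH:$SCALA_HOME/bin"
```

To apply the change in a terminal that is already open, run `source ~/.bashrc`. New terminals read the file on their own.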

Step 5: After changing .bashrc, open a new terminal and verify the change with the 'echo $SCALA_HOME' command.

Run the above command in the new terminal to confirm that SCALA_HOME has been updated.

Step 6: After that, check the Scala version.
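The two checks from Steps 5 and 6 can be combined in a small sketch like the one below. It assumes a Scala 2.x install, where the launcher accepts the `-version` flag; `scala_on_path` is a hypothetical helper name used only for this example.

```shell
# Hypothetical helper: succeeds if a scala launcher is reachable through PATH.
scala_on_path() {
    command -v scala >/dev/null 2>&1
}

# Step 5: show SCALA_HOME, or a placeholder if it is empty or unset.
echo "SCALA_HOME=${SCALA_HOME:-<not set>}"

# Step 6: print the version only when the launcher can be found.
if scala_on_path; then
    scala -version
else
    echo "scala not found on PATH; re-check the .bashrc entries"
fi
```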