Apache Spark is an in-memory cluster computing framework for processing and analyzing large amounts of data (big data). Spark provides a simpler programming model than MapReduce, so developing a distributed data processing application with Apache Spark is a lot easier than developing the same application with MapReduce. Hadoop MapReduce provides only two operations for processing data, “Map” and “Reduce”, whereas Spark comes with more than 80 data processing operations for big data applications.

When processing data from source to destination, Spark can be up to 100 times faster than Hadoop MapReduce for workloads that fit in memory, because it supports in-memory cluster computing and implements an advanced execution engine.

What is meant by Apache Spark Lazy Evaluation?

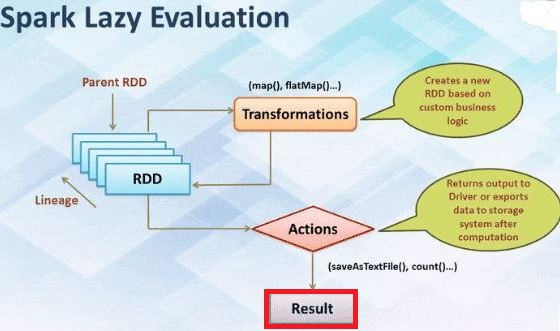

Apache Spark has two types of RDD operations:

I) Transformations

II) Actions

We can define new RDDs at any time, but Apache Spark computes them lazily, that is, the first time they are used in an action. Lazy evaluation seems unusual at first but makes a lot of sense when you are working with large data (big data).

“Simply put, lazy evaluation in Spark means that execution does not start until an action is triggered. Lazy evaluation comes into the picture whenever Spark transformations are applied.”

Consider a case where we define a text file and then filter the lines that include the client name “CISCO”. If Apache Spark were to load and store all the lines in the file as soon as we wrote lines = sc.textFile(filePath), it would waste a lot of storage space, given that we then immediately filter out many lines. Instead, once Spark sees the whole chain of transformations, it can compute just the data needed for its result. Hence, for the first() action, Apache Spark scans the file only until it finds the first matching line; it doesn’t even read the whole file.
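The scenario above can be sketched in Scala as follows. This is a minimal illustration, not a definitive implementation: it assumes Spark is on the classpath and runs in local mode, and the object name `LazyFilterSketch`, the sample lines, and the temporary file are hypothetical. An accumulator counts how many lines the filter has examined; accumulator updates made inside transformations are not guaranteed to be exactly-once, so treat the counter as an indicator rather than a precise measurement.

```scala
import java.nio.charset.StandardCharsets
import java.nio.file.Files
import org.apache.spark.sql.SparkSession

object LazyFilterSketch {
  // Returns (lines examined before the action, first match, lines examined after it).
  def run(): (Long, String, Long) = {
    val spark = SparkSession.builder()
      .master("local[1]")            // assumption: local mode with one thread
      .appName("lazy-eval-sketch")
      .getOrCreate()
    val sc = spark.sparkContext
    try {
      // Hypothetical sample data written to a temporary file.
      val path = Files.createTempFile("clients", ".txt")
      val sample = "ACME order 1\nCISCO order 2\nACME order 3\nCISCO order 4\n"
      Files.write(path, sample.getBytes(StandardCharsets.UTF_8))

      val examined = sc.longAccumulator("lines examined")

      // Transformations only: Spark records them but reads nothing yet.
      val lines = sc.textFile(path.toString)
      val ciscoLines = lines.filter { line =>
        examined.add(1L)
        line.contains("CISCO")
      }
      val before = examined.sum

      // Action: this is what triggers the read and the filter.
      val firstMatch = ciscoLines.first()
      (before, firstMatch, examined.sum)
    } finally {
      spark.stop()
    }
  }
}
```

Calling `LazyFilterSketch.run()` should report zero examined lines before `first()` is called and a non-zero count afterwards, which shows that defining `lines` and `ciscoLines` did no work on its own.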

Advantages Of Lazy Evaluation in Spark Transformations:

Some advantages of lazy evaluation in Spark are listed below:

- Increases manageability: With lazy evaluation, users can divide a program into smaller operations. Spark reduces the number of passes over the data by grouping transformations together.

- Increases speed: Lazy evaluation saves round trips between the driver and the cluster, which speeds up the process.

- Reduces complexity: Every operation has two kinds of complexity, time and space. Lazy evaluation helps reduce both, because work is triggered only when the data is actually required.
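The point about fewer passes over the data can be illustrated without a cluster. The sketch below is plain Scala, not Spark: it uses a standard-library `Iterator` as a stand-in for the way Spark pipelines consecutive transformations, and the names `PipelineSketch` and `trace` are hypothetical. It logs the order in which a map step and a filter step touch each element.

```scala
import scala.collection.mutable.ArrayBuffer

object PipelineSketch {
  // Records the order in which the two steps touch each element.
  def trace(lazily: Boolean): List[String] = {
    val log = ArrayBuffer.empty[String]
    val times10 = (x: Int) => { log += s"map($x)"; x * 10 }
    val over15 = (x: Int) => { log += s"filter($x)"; x > 15 }
    val data = List(1, 2, 3)

    if (lazily) {
      // Lazy: each element flows through map and filter in a single pass.
      data.iterator.map(times10).filter(over15).toList
    } else {
      // Eager: a full pass for map, then a second pass for filter.
      data.map(times10).filter(over15)
    }
    log.toList
  }
}
```

With `lazily = false` the log shows all map calls followed by all filter calls, that is, two passes. With `lazily = true` the calls interleave element by element, so the data is traversed once and no intermediate list is built.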

Simple Example:

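The code below is written in Scala and illustrates the idea with a lazy val. A plain val is evaluated as soon as it is declared, whereas a lazy val is evaluated only when it is first used. This is Scala's language-level laziness rather than Spark's, but the principle is the same.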

With Lazy:

scala> val sparkList = List(1, 2, 3, 4)

scala> lazy val output = sparkList.map(x => x * 10)

scala> println(output)

Output:

List(10, 20, 30, 40)
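The printed list alone does not show when the computation happened. The following sketch, under the assumption that a simple counter is an acceptable way to observe evaluation, makes the timing visible; `LazyValSketch`, `run`, and `evaluations` are hypothetical names introduced for illustration.

```scala
object LazyValSketch {
  // Returns (evaluations before first use, the value, evaluations after two uses).
  def run(): (Int, List[Int], Int) = {
    var evaluations = 0
    val sparkList = List(1, 2, 3, 4)

    // Declaring the lazy val does not run the block.
    lazy val output = { evaluations += 1; sparkList.map(x => x * 10) }
    val before = evaluations

    val first = output   // first use triggers the evaluation
    val second = output  // second use reuses the cached result
    assert(first == second)

    (before, first, evaluations)
  }
}
```

`LazyValSketch.run()` returns `(0, List(10, 20, 30, 40), 1)`: nothing is computed at declaration, and the block runs exactly once even though `output` is read twice.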