This article explains how to resolve a PXF agent error in a Hortonworks cluster. PXF is an extension framework for HAWQ that provides access to external data.

PXF is down – Tomcat is not running:

I checked the Catalina log file, and it showed the error below for the PXF service in the Hortonworks cluster.

tomcat not responding, re-trying after 1 second ERROR: PXF is down - tomcat is not running

Resolution:

Step 1: Remove the old PXF files from the var directory. Go to the /var/pxf path shown below:

/var/pxf

Step 2: Initialize the PXF service.

/etc/init.d/pxf-service init

Step 3: After initialization, stop the PXF service using the command below.

/etc/init.d/pxf-service stop

Step 4: Start the PXF service using the command below.

/etc/init.d/pxf-service start

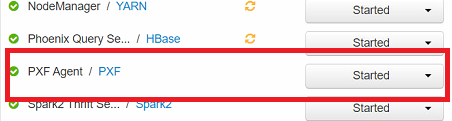
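Steps 2 to 4 can also be wrapped in a small shell helper so they always run in the same order. This is only a minimal sketch: the function name `reset_pxf` is hypothetical, the default path is the init script used in this article, and it assumes the script returns a non-zero exit code on failure. Step 1 (cleaning /var/pxf) is left as a manual step.

```shell
# Hypothetical helper: runs init, stop, start against the PXF init script.
# The optional first argument overrides the script path used in this article.
reset_pxf() {
    svc="${1:-/etc/init.d/pxf-service}"

    # Step 2: initialize PXF; give up if this fails.
    "$svc" init || { echo "pxf-service init failed" >&2; return 1; }

    # Step 3: stop PXF; a failure here is only reported, because the
    # service may simply not be running at this point.
    "$svc" stop || echo "pxf-service stop returned non-zero" >&2

    # Step 4: start PXF; this must succeed.
    "$svc" start || { echo "pxf-service start failed" >&2; return 1; }
}
```

Run `reset_pxf` as a user that is allowed to manage the PXF service, or pass a different script path as the first argument if your installation keeps it elsewhere.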

After the PXF agent is restarted on the edge node, it reports that the PXF web app is up. If it is still not running, restart the Ambari agent and Ambari server on the edge node/installation node.
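The check and the Ambari fallback can be sketched as a shell function. The name `ensure_pxf_up` is hypothetical, and the sketch assumes that the init script supports a `status` action that returns a non-zero exit code when PXF is down, and that `ambari-agent` and `ambari-server` are installed on the node where it runs.

```shell
# Hypothetical helper: check PXF, and restart Ambari only if PXF is still down.
# Assumes "pxf-service status" exits non-zero when PXF is not running.
ensure_pxf_up() {
    svc="${1:-/etc/init.d/pxf-service}"

    if "$svc" status >/dev/null 2>&1; then
        echo "PXF is up"
    else
        echo "PXF is still down, restarting Ambari agent and server" >&2
        ambari-agent restart
        ambari-server restart
    fi
}
```

If the Ambari server runs on a different host than the edge node, run the `ambari-server restart` part there instead.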

Summary:

In a Big Data environment, I was installing the Hortonworks distribution with all services and added the HAWQ framework, including the PXF agent, to the cluster. After that, I restarted the PXF agent, but it did not start. Checking the PXF log files on the edge node showed "PXF is down – Tomcat is not running". This article provides a simple solution for getting Tomcat running again in the PXF service.

In a Hortonworks/Cloudera cluster with HAWQ, PXF is important because it lets the SQL engine read and write data in HDFS with high scalability. To fix the error, remove the old PXF files, initialize PXF again, and restart the PXF service in the Hadoop cluster.