What is Stateful Transformation in Spark Streaming?

Stateful transformation is a feature of Spark Streaming that lets users maintain state between micro-batches. In other words, state is kept over a period of time, which can be as long as the entire lifetime of a streaming job.

It also lets us build sessions from our data. This is achieved by enabling checkpointing on the streaming application. Stateful operations need checkpoints, whereas some windowed operations may not require them.

In other words, these are operations on DStreams that track data across time: they use data from previous batches to generate the results for a new batch.

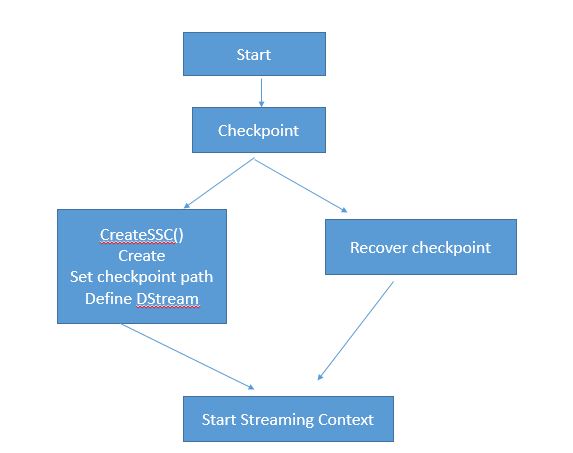
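To make the idea concrete, here is a minimal sketch in plain Scala, with no Spark involved, of a running word count whose state is carried from one micro-batch to the next. The object name, the `updateState` helper, and the sample batches are hypothetical and exist only for illustration.

```scala
// Plain-Scala illustration (no Spark needed): state from earlier batches
// is merged with each new batch to produce the next result.
object RunningCountSketch {
  type State = Map[String, Int]

  // Merge one batch of words into the state carried over from earlier batches.
  def updateState(previous: State, batch: Seq[String]): State =
    batch.foldLeft(previous) { (state, word) =>
      state.updated(word, state.getOrElse(word, 0) + 1)
    }

  def main(args: Array[String]): Unit = {
    // Hypothetical input: three micro-batches of words.
    val batches = Seq(Seq("a", "b"), Seq("a"), Seq("b", "b", "c"))
    // scanLeft keeps the state after every batch, starting from an empty state.
    val states = batches.scanLeft(Map.empty[String, Int])((s, b) => updateState(s, b))
    states.tail.foreach(println)
  }
}
```

Each printed map is the state after one batch, so the count for a word keeps growing across batches instead of resetting.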

Checkpoint mechanism in Spark:

1. The first time the program runs, it creates a new StreamingContext.

2. While creating the context, configure checkpointing with ssc.checkpoint(path).

3. Define the DStreams in the same function that creates the context.

4. When the program restarts after a failure, it recreates the StreamingContext from the checkpoint.
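The steps above can be sketched with `StreamingContext.getOrCreate`, which rebuilds the context from a checkpoint when one exists and otherwise calls a factory function. This is a minimal sketch, not a definitive setup: the object name, application name, socket source on `localhost:9999`, and the 5-second batch interval are assumptions, and the master URL is assumed to be supplied by `spark-submit`.

```scala
import org.apache.spark.SparkConf
import org.apache.spark.streaming.{Seconds, StreamingContext}

object CheckpointRecoverySketch {
  // Placeholder checkpoint location; use fault-tolerant storage in practice.
  val checkpointDir = "/path/to/persistent/storage"

  // Called only when no checkpoint exists yet (steps 1 to 3).
  def createContext(): StreamingContext = {
    val conf = new SparkConf().setAppName("CheckpointRecoverySketch")
    val ssc = new StreamingContext(conf, Seconds(5))
    ssc.checkpoint(checkpointDir)
    // Define the DStreams inside this function; host and port are placeholders.
    val lines = ssc.socketTextStream("localhost", 9999)
    lines.count().print()
    ssc
  }

  def main(args: Array[String]): Unit = {
    // Step 4: recover from the checkpoint if present, else build a new context.
    val ssc = StreamingContext.getOrCreate(checkpointDir, () => createContext())
    ssc.start()
    ssc.awaitTermination()
  }
}
```

Keeping all DStream definitions inside the factory function matters because a recovered context restores them from the checkpoint rather than running that code again.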

How to set a checkpoint directory:

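import org.apache.spark.SparkContext
import org.apache.spark.streaming.{Duration, StreamingContext}

val sparkContext = new SparkContext() // settings are expected to come from spark-submit

val ssc = new StreamingContext(sparkContext, Duration(5000)) // 5000 ms batch interval

ssc.checkpoint("/path/to/persistent/storage") // directory where checkpoints are written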

DStream updateStateByKey vs mapWithState in Spark Streaming:

updateStateByKey:

updateStateByKey runs over every key held in the state on each micro-batch, whether or not new data arrived for that key. As a result, its cost is proportional to the size of the state.
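A minimal sketch of a running word count with `updateStateByKey` follows. The object name, the `updateCount` helper, the socket source, and the batch interval are assumptions made for illustration; the master URL is assumed to come from `spark-submit`.

```scala
import org.apache.spark.SparkConf
import org.apache.spark.streaming.{Seconds, StreamingContext}

object UpdateStateByKeySketch {
  // Invoked for every key in the state on every batch,
  // even when newValues is empty for that key.
  def updateCount(newValues: Seq[Int], running: Option[Int]): Option[Int] =
    Some(running.getOrElse(0) + newValues.sum)

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("UpdateStateByKeySketch")
    val ssc = new StreamingContext(conf, Seconds(5))
    // Stateful operations need a checkpoint directory (placeholder path).
    ssc.checkpoint("/path/to/persistent/storage")

    // Placeholder source: lines of text from a local socket.
    val words = ssc.socketTextStream("localhost", 9999).flatMap(_.split(" "))
    val counts = words
      .map(word => (word, 1))
      .updateStateByKey[Int]((values: Seq[Int], state: Option[Int]) => updateCount(values, state))
    counts.print()

    ssc.start()
    ssc.awaitTermination()
  }
}
```

Returning `None` from the update function instead of `Some(...)` removes the key from the state, which is one way to keep the state from growing without bound.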

mapWithState:

mapWithState runs only on the keys that are present in the current micro-batch. As a result, its cost is proportional to the size of the batch.
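For comparison, here is a minimal sketch of the same running word count with `mapWithState`. The object name, the `nextTotal` helper, the socket source, and the batch interval are assumptions made for illustration; the master URL is assumed to come from `spark-submit`.

```scala
import org.apache.spark.SparkConf
import org.apache.spark.streaming.{Seconds, State, StateSpec, StreamingContext}

object MapWithStateSketch {
  // Hypothetical helper: add the incoming value to the stored total.
  def nextTotal(previous: Option[Int], incoming: Option[Int]): Int =
    previous.getOrElse(0) + incoming.getOrElse(0)

  def main(args: Array[String]): Unit = {
    val conf = new SparkConf().setAppName("MapWithStateSketch")
    val ssc = new StreamingContext(conf, Seconds(5))
    // Stateful operations need a checkpoint directory (placeholder path).
    ssc.checkpoint("/path/to/persistent/storage")

    // Invoked only for keys that appear in the current batch.
    val mappingFunc = (word: String, one: Option[Int], state: State[Int]) => {
      val total = nextTotal(state.getOption(), one)
      state.update(total) // store the new total for this key
      (word, total)
    }

    // Placeholder source: lines of text from a local socket.
    val words = ssc.socketTextStream("localhost", 9999).flatMap(_.split(" "))
    val counts = words.map(word => (word, 1)).mapWithState(StateSpec.function(mappingFunc))
    counts.print()

    ssc.start()
    ssc.awaitTermination()
  }
}
```

Unlike the `updateStateByKey` version, the output stream here contains only the keys touched in the current batch, not a full dump of the state.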